About

B3LB is based on the Django Python Web framework and is designed to work in large scale-out deployments with 100+ BigBlueButton nodes and high attendee join rates.

Architecture

To scale to a huge number of attendees, you can:

scale out the B3LB API frontends

scale out the B3LB polling workers

scale out your BBB nodes

Features

multiple B3LB frontend instances

backend BBB node polling using Celery

extensive caching based on Redis

robust against high BBB node response times (e.g. due to ongoing DDoS attacks)
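The features above describe Celery-based polling that stays robust when BBB nodes respond slowly. The sketch below does not use Celery and is not B3LB's implementation. It only illustrates the underlying idea with Python's standard `concurrent.futures` module: all nodes are polled concurrently against a shared deadline, and a node that is too slow or fails is reported as unavailable (`None`) instead of stalling the whole polling round. `poll_nodes` and the simulated node functions are hypothetical.

```python
import concurrent.futures
import time
from typing import Callable, Dict, Optional

# Hypothetical: each node name maps to a callable that fetches its metrics.
Fetcher = Callable[[], dict]


def poll_nodes(fetchers: Dict[str, Fetcher], timeout: float) -> Dict[str, Optional[dict]]:
    """Poll all nodes concurrently with a shared deadline.

    Nodes that fail or do not answer within `timeout` seconds are
    reported as None, so one slow node cannot delay the others.
    """
    results: Dict[str, Optional[dict]] = {name: None for name in fetchers}
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=max(1, len(fetchers)))
    futures = {executor.submit(fetch): name for name, fetch in fetchers.items()}
    done, _not_done = concurrent.futures.wait(futures, timeout=timeout)
    for future in done:
        if future.exception() is None:
            results[futures[future]] = future.result()
    # Return without waiting for slow nodes; their threads finish in the background.
    executor.shutdown(wait=False)
    return results


# Usage with simulated nodes.
def fast_node() -> dict:
    return {"attendees": 12, "meetings": 2}


def slow_node() -> dict:
    time.sleep(0.5)  # simulates a node with very high response times
    return {"attendees": 0, "meetings": 0}


def broken_node() -> dict:
    raise ConnectionError("node unreachable")


print(poll_nodes({"bbb1": fast_node, "bbb2": slow_node, "bbb3": broken_node}, timeout=0.2))
# {'bbb1': {'attendees': 12, 'meetings': 2}, 'bbb2': None, 'bbb3': None}
```

Keeping the deadline per polling round, rather than per request in sequence, bounds how long one round can take regardless of how many nodes are slow.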

BBB Clustering

supports a high number of BBB nodes

different load balancing factors per cluster

load calculation based on attendee, meeting and CPU load metrics

maintenance mode to disable BBB nodes gracefully

BBB Frontend API

Multitenancy

per-tenant API hostnames

start presentation injection

branding logo injection

multiple API secrets per tenant
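The BigBlueButton API authenticates each call with a checksum, classically the SHA-1 hex digest of the call name, the query string (without the `checksum` parameter) and the shared secret. The following minimal sketch shows how per-tenant API hostnames and multiple secrets per tenant can fit together. It is not B3LB's code: the `TENANT_SECRETS` mapping, the function names and the secrets are hypothetical. The tenant is resolved from the API hostname, and a request is accepted if any of that tenant's secrets reproduces the checksum.

```python
import hashlib
import hmac
from typing import Dict, List
from urllib.parse import urlencode

# Hypothetical tenant registry: API hostname -> list of valid API secrets.
TENANT_SECRETS: Dict[str, List[str]] = {
    "acme.bbb.example.org": ["old-secret", "new-secret"],
    "globex.bbb.example.org": ["globex-secret"],
}


def bbb_checksum(call_name: str, query_string: str, secret: str) -> str:
    """Classic BBB API checksum: SHA-1 hex digest of call name + query string + secret."""
    return hashlib.sha1((call_name + query_string + secret).encode("utf-8")).hexdigest()


def is_valid_request(host: str, call_name: str, query_string: str, checksum: str) -> bool:
    """Resolve the tenant by API hostname, then accept any of its secrets."""
    secrets = TENANT_SECRETS.get(host.lower(), [])  # unknown host -> no secrets
    return any(
        # Constant-time comparison of the expected and the received checksum.
        hmac.compare_digest(bbb_checksum(call_name, query_string, s).encode(), checksum.encode())
        for s in secrets
    )


# Usage: a client of the "acme" tenant signs a create call with its new secret.
query = urlencode({"meetingID": "room-1", "name": "Demo"})
signed = bbb_checksum("create", query, "new-secret")
print(is_valid_request("acme.bbb.example.org", "create", query, signed))    # True
print(is_valid_request("globex.bbb.example.org", "create", query, signed))  # False
```

Accepting several secrets per tenant means, for example, that an old and a new secret can both be valid while clients are being migrated.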

Monitoring

simple health-check URL

simple JSON statistics URL

Prometheus metrics URL

Load Calculation

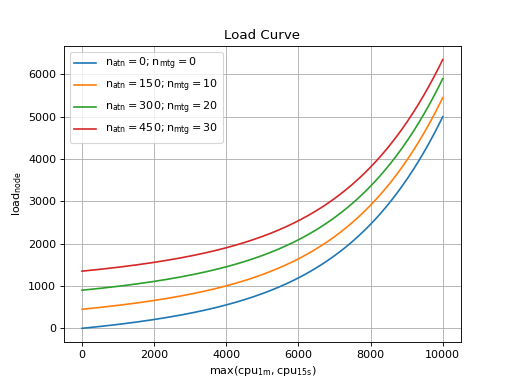

To select a BBB node for a new meeting, B3LB calculates a load value for each BBB node and chooses the node with the lowest value. The load is based on three metrics:

number of attendees

number of meetings

CPU utilization (base 10,000)

Each of these metrics matters when deciding where to spawn new meetings. The CPU utilization depends on the current load caused by running meetings and also reflects external effects on the BBB nodes. The number of meetings is important because it indicates that more attendees may join and cause even more load in the future.

The CPU utilization is reinforced so that its contribution to the load rises slowly while CPU utilization is low and ever more steeply as it grows. The following plot shows the load value for a BBB node depending on its CPU utilization (base 10,000) for different attendee and meeting counts.


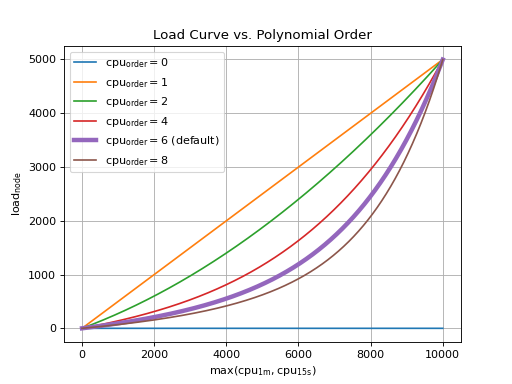
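One functional form consistent with this description is sketched below. It is an assumption for illustration, not B3LB's documented formula: a single power term stands in for the polynomial, A is the number of attendees, M the number of meetings, u the CPU utilization on the 0 to 10,000 scale, a, m and c are hypothetical weights, and p ≥ 1 plays the role of the polynomial order.

$$
\mathrm{load} = a\,A + m\,M + c\left(\frac{u}{10\,000}\right)^{p}
$$

For p = 3, a node at u = 5,000 gets a CPU contribution of 0.125 c, while a node at u = 9,000 gets 0.729 c: the CPU term grows slowly at low utilization and steeply near saturation.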

Tuning the polynomial order makes the load balancing more or less sensitive to CPU load.

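A minimal Python sketch of node selection under a simplifying assumption: a single power term with a configurable `order` stands in for B3LB's polynomial, and `Node`, `node_load`, `pick_node` and the default weights are hypothetical. Nodes in maintenance mode are skipped, and the remaining node with the lowest load is chosen.

```python
from dataclasses import dataclass
from typing import List, Optional

CPU_BASE = 10_000  # CPU utilization is reported on a 0..10,000 scale


@dataclass
class Node:
    name: str
    attendees: int
    meetings: int
    cpu: int  # CPU utilization, base 10,000
    maintenance: bool = False


def node_load(node: Node, a_factor: float, m_factor: float,
              cpu_factor: float, order: int) -> float:
    """Hypothetical load: linear in attendees and meetings, power law in CPU."""
    cpu_term = cpu_factor * (node.cpu / CPU_BASE) ** order
    return a_factor * node.attendees + m_factor * node.meetings + cpu_term


def pick_node(nodes: List[Node], order: int, a_factor: float = 1.0,
              m_factor: float = 10.0, cpu_factor: float = 1000.0) -> Optional[Node]:
    """Return the non-maintenance node with the lowest load, or None."""
    candidates = [n for n in nodes if not n.maintenance]
    if not candidates:
        return None
    return min(candidates,
               key=lambda n: node_load(n, a_factor, m_factor, cpu_factor, order))


nodes = [
    Node("bbb1", attendees=150, meetings=5, cpu=4_000),  # busy, moderate CPU
    Node("bbb2", attendees=20, meetings=3, cpu=6_000),   # quiet, external CPU load
    Node("bbb3", attendees=0, meetings=0, cpu=0, maintenance=True),
]
print(pick_node(nodes, order=1).name)  # bbb1
print(pick_node(nodes, order=4).name)  # bbb2
```

With `order=1` the loads of bbb1 and bbb2 are 600 and 650, so the node with the lower CPU utilization wins. With `order=4` they drop to about 225.6 and 179.6, because a utilization of 60 % is penalized only lightly, so the node with fewer attendees and meetings is chosen instead.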

Container Images

B3LB provides three different Docker images on Quay.io and GitHub Packages. The images can be built from source using the provided Dockerfiles.

Hint

There are intentionally no b3lb:latest or b3lb-static:latest image tags. Always pick an explicit version for your deployment.

Warning

Since Docker has stopped supporting OSS, images for b3lb ≥ 2.2.1 are no longer provided on Docker Hub.

b3lb

This image contains the Django files of b3lb to run the ASGI application, the Celery tasks and the management CLI commands.

docker pull quay.io/ibh/b3lb:2.2.2

docker pull docker.pkg.github.com/de-ibh/b3lb/b3lb:2.2.2

b3lb-static

This image uses the Caddy web server to serve the static assets of the Django admin UI. It can also be used to publish per-tenant assets.

docker pull quay.io/ibh/b3lb-static:2.2.2

docker pull docker.pkg.github.com/de-ibh/b3lb/b3lb-static:2.2.2

b3lb-pypy

This image contains the Django files of b3lb and uses PyPy (https://www.pypy.org/) instead of CPython. This boosts the performance of the Celery workers when they need to process a huge number of nodes or attendees.

docker pull quay.io/ibh/b3lb-pypy:2.2.2

docker pull docker.pkg.github.com/de-ibh/b3lb/b3lb-pypy:2.2.2

Warning

Use b3lb-pypy for the Celery workers only. It is not well tested for any other task and is known to waste memory. Run it only with cgroup-based memory limits in place to prevent excessive memory swapping or OOM kills.

b3lb-dev

This is the development build of b3lb, which uses Django's single-threaded built-in web server. Never use it in production.

docker pull ibhde/b3lb-dev:latest

docker pull docker.pkg.github.com/de-ibh/b3lb/b3lb-dev:latest